This is an investigation into some aspects of the way the C programming language creates meaning. In any formalized language, meaning is created by a tension between a community of speakers and the language's formal definition. In the case of C, this community preceded and presided over the formal definition in such a way that the formal definition itself embodies this tension. Because of this, C has a distinctive view of how programming languages work, and how language in general should work. Specifically, I will argue that the 1989 ANSI C standard introduces the concept of abstraction by ambiguity into formal language specifications. Paradoxically, this ambiguity allows the knowledgeable programmer to be more specific than would otherwise be possible, while retaining the extensional benefits of abstraction. This has implications for philosophy of language in general, which I will briefly address.

This work is situated in the emerging field of critical code studies (Marino). Although there has been related work dating all the way back into the 80s and 90s (Knuth; Winograd; Landow; Kittler), most studies that self-consciously look at code itself from a perspective that goes beyond computer science are a very recent phenomenon (Fuller; Chun). If much of my investigation seems overly broad, then, that's to be expected: just as a Polaroid photograph develops with broad splotches of color, only acquiring precision at the end of the process, likewise a new investigation must be satisfied with the faith that its clumsiness will be turned into precision with time. Many things herein are assertions with little corresponding argument, yet assertions which nevertheless to me seemed interesting enough to present for consideration in the hope that they might function as depth-soundings for future navigation.

C has an unusual history among programming languages. It began life as CPL, or Combined Programming Language, a language inspired by ALGOL 60 and APL. In the early 60s, CPL was developed jointly (hence "Combined") between the Mathematical Laboratory at the University of Cambridge and the University of London. The fate of CPL was mostly determined by its bulky design: it was almost a decade before even a rudimentary compiler was written, and it never achieved practicality (Burstall 52). In the mid-60s, BCPL ("Bootstrap CPL") was designed as a language in which to create a compiler for CPL. It soon had a well-defined, practical, working compiler, and began to be used as a language in its own right. In the Multics project, BCPL became the language of choice among the AT&T contingent (including Ken Thompson), as it was more manageable and lightweight than the officially sanctioned PL/I. As is recounted elsewhere, Multics fell apart and Ken Thompson created Unix in assembler on a PDP-7 (Ritchie, "Evolution of UNIX"). Thompson wanted a high-level language, so around 1969, he designed B, a scaled-down, simplified version of BCPL that could fit on the extremely bare PDP-7 (Ritchie, "Development of C" 2). Somewhere in the process of porting B (and Unix) to the PDP-11, Dennis Ritchie created C, as he says, "leaving open the question whether the name represented a progression through the alphabet or through the letters in BCPL" ("Development of C" 8).

C was a compiler before it was a specification. Soon afterwards, a Unix manual page was created. From the manual page, an informal specification was written, which in 1978 became Appendix A to Kernighan and Ritchie's book The C Programming Language. Until 1989, this was to serve as the de facto standard for C, known as "K&R C." Around the same time (1978-1979), PCC (Portable C Compiler) was written by Steve Johnson, then ported to a great many architectures and systems, in the Unix world and beyond (Johnson). Before long, there was at least one C compiler implementation for a given system, and more usually at least two. There was a lot of divergence among implementations, as even prior to the publication of K&R C, PCC as well as Ritchie's compiler had already developed additional features and alterations to the documented behavior. This divergence only increased as the language continued to develop.

The important thing to note in this history is how different the evolution of C was compared to the development of a typical programming language. Most programming languages, excepting a few derived from and dependent on C, were either (1) developed in a relatively short time period, by a single person or small group, in a way that might be characterized as a derivation from first principles,1 or (2) developed by committee from a specific list of features.2 It is difficult to reconcile C's history with either of these models. C was "designed" by one person, Dennis Ritchie, but his language was supposed to allow the easy reuse of source code from many programs already written in B, a constraint which overshadowed the design. Further, C as a language did not stay put for very long, but quickly changed according to the changing demands of the user base and code base. As C was ported to non-Unix architectures and systems which weren't even thought of during the design process, the language was modified for use with those environments. In short, one cannot examine the origins of C without confronting that which is so conspicuously absent from the origins of most programming languages: a community of speakers.

In the early 80s, a number of institutions began to see the need for a C standard. By 1983, CBEMA (The Computer and Business Equipment Manufacturers Association) established committee X3J11 to standardize C.3 After many drafts and many comments were addressed, a standard was finally produced in 1989, which was submitted to ANSI to become ANSI Standard C (or "C89").

In describing the purpose of ANSI C, the Rationale for ANSI C states "The X3J11 charter clearly mandates the Committee to codify common existing practice [my emphasis]" (X3J11 1). But as we have noted, "common existing practice" was diverging. In some cases, the X3J11 committee resolved this by privileging one of a set of practices as the standard. In other cases, the committee created a new language feature to replace older implementations. But often, the committee standardized divergence itself, in the form of "unspecified," "undefined," and "implementation-defined" behavior. The Rationale for ANSI C defines these terms as follows:

The terms unspecified behavior, undefined behavior, and implementation-defined behavior are used to categorize the result of writing programs whose properties the standard does not, or cannot, completely describe.

[...]

Unspecified behavior gives the implementer some latitude in translating programs. This latitude does not extend as far as failing to translate the program.

Undefined behavior gives the implementer license not to catch certain program errors that are difficult to diagnose. It also identifies areas of possible conforming language extensions: the implementer may augment the language by providing a definition of the officially undefined behavior.

Implementation-defined behavior gives an implementer the freedom to choose the appropriate approach, but requires that this choice be explained to the user. Behaviors designated as implementation-defined are generally those in which a user could make meaningful coding decisions based on the implementation definition. Implementers should bear in mind this criterion when deciding how extensive an implementation definition ought to be. As with unspecified behavior, simply failing to translate the source containing the implementation-defined behavior is not an adequate response. (X3J11 6)

These three terms differ mostly in terms of documentation. Unspecified or undefined behavior is arbitrary and need not be documented (but often is). Implementation-defined behavior must be documented. All three terms specify that the implementation (i.e. the compiler author) is almost completely free in their choices. In the case of unspecified or implementation-defined behavior, something specific must happen; in the case of undefined behavior, there are absolutely no requirements.
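The three categories can be made concrete with a small sketch. The function names below are my own illustrative inventions, not anything found in the standard or the Rationale. The first function depends on the unspecified order in which function arguments are evaluated, the second on the implementation-defined result of right-shifting a negative value, and the third guards against signed integer overflow, which is undefined.

```c
#include <limits.h>

/* Records the order in which mark() was called. */
static int trace[2];
static int calls;

static int mark(int id)
{
    trace[calls++] = id;
    return id;
}

static int add(int a, int b)
{
    return a + b;
}

/* Unspecified: the standard does not fix the order in which the two
   arguments are evaluated, and the implementation need not document
   its choice. Returns the id of whichever argument was evaluated first. */
static int first_argument_evaluated(void)
{
    calls = 0;
    (void)add(mark(1), mark(2));
    return trace[0];
}

/* Implementation-defined: the result of right-shifting a negative
   signed value is chosen by the implementation, which must document it. */
static int shift_negative_right(void)
{
    int x = -8;
    return x >> 1;
}

/* Undefined: computing INT_MAX + 1 imposes no requirements at all.
   The guard keeps this function inside defined behavior. */
static int checked_increment(int x, int *ok)
{
    if (x == INT_MAX) {
        *ok = 0;
        return x;
    }
    *ok = 1;
    return x + 1;
}
```

A caller can rely on first_argument_evaluated returning either 1 or 2, but not on which; only the compiler's documentation (if it says anything) or an experiment will tell.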

The community's reaction to this has generally been to discourage use of language features in these classes as much as possible. This was perhaps most famously stated by John Woods in a post to comp.std.c: "Permissible undefined behavior ranges from ignoring the situation completely with unpredictable results, to having demons fly out of your nose" (Woods). His point is twofold: first, that literally anything can happen, not just anything among a seemingly logical set of choices; second, just don't use undefined behavior. But in practice, so much in ANSI C is undefined, unspecified, or implementation-defined that few programs can avoid these categories, and many make active use of them.

To illustrate a case where undefined behavior is used productively, look at the following example (figure 1). This code fragment is a function that atomically4 exchanges one floating point value for another. The function is built on an already-existing function atomic_xchg which atomically exchanges two integers.

Line 1 defines the function, which takes two variables, the address of an (atomic) floating point value, v, and the value to exchange with the value in the address, new. Lines 2 through 5 define a variable, pun, which can have either a floating point or an integral value. Or so the language definition says. In an implementation which stores both floating point and integral values as 32 bits, which can be located in an arbitrary spot in memory, this reserves 32 bits of memory and gives two interpretations of it, pun.f (floating point) and pun.i (integer). This is a technique called "type punning": interpreting the representation of an object in more than one way. In this case, we're interpreting the representation of a floating point value, a set of 32 bits, as the 32 bits representing an integer type. Line 6 sets that variable, or area of memory, equal to the value of new. According to the standard, now pun.i is undefined, but this is precisely the value we use. Line 8 makes two type puns: first, by using pun.i as described above; second, by casting, interpreting v, which is the address of an atomic floating point value, as the address of an atomic integer value. Since memory is just a bit space, this is just a matter of interpretation. Lastly, in line 11 pun.f is returned, interpreting the integral value returned by atomic_xchg as a floating point value.
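What follows is a sketch reconstructed from the description above, not a transcription of figure 1, so its line numbers will not match those in the walkthrough. The types atomic_t and atomic_float_t, the name atomic_xchg_float, and the body of atomic_xchg are hypothetical stand-ins that let the fragment compile on its own; in particular, this stand-in atomic_xchg is not actually atomic. The static_assert (a later, C11 addition) simply records the assumption that float and int occupy the same number of bytes.

```c
#include <assert.h> /* static_assert (C11) */

/* Hypothetical stand-ins for the atomic types the text assumes. */
typedef struct { volatile int counter; } atomic_t;
typedef struct { volatile float value; } atomic_float_t;

/* The pun only makes sense if both representations are the same size. */
static_assert(sizeof(float) == sizeof(int),
              "float and int must have the same size");

/* Placeholder for the pre-existing integer exchange. This version is
   NOT atomic; a real one would rely on a hardware exchange instruction. */
static int atomic_xchg(atomic_t *v, int new_value)
{
    int old = v->counter;
    v->counter = new_value;
    return old;
}

/* Exchange the float stored at v for new_value; return the old float. */
static float atomic_xchg_float(atomic_float_t *v, float new_value)
{
    union {
        float f;
        int   i;
    } pun;

    pun.f = new_value;                          /* store the bits as a float */
    pun.i = atomic_xchg((atomic_t *)v, pun.i);  /* reuse the same bits as an int;
                                                   treat v as an atomic integer */
    return pun.f;                               /* read the old bits as a float */
}
```

Whether this does what it appears to do depends entirely on the compiler and the machine, which is the subject of the paragraphs that follow.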

In most cases, type punning involves violating the constraints of a language in some way. As my comments above make clear, type punning is not supported by the C language. In fact, since punning always involves the representation and not the semantics, it is only truly possible outside of the defined semantics of a given language. In most languages, what this means is that type punning is simply not possible; in C it is relatively common.

C is a high-level, portable language, and there is no part of the above code fragment that is not written in pure C, yet nevertheless understanding what it will do requires machine-specific knowledge. We must be sure that the physical representation of an int and a float can be exchanged with no loss of data. This will not work, for example, on any machine where float and int are different sizes. More subtly, it will not work on any machine that has separate registers for floating-point and integral values and optimizes the variable pun to a register. Type punning is not sanctioned by ANSI Standard C, but neither is it prohibited: it is undefined.5 And this ambiguity in the definition of C is precisely what enables a high-level language to reach the physical machine.

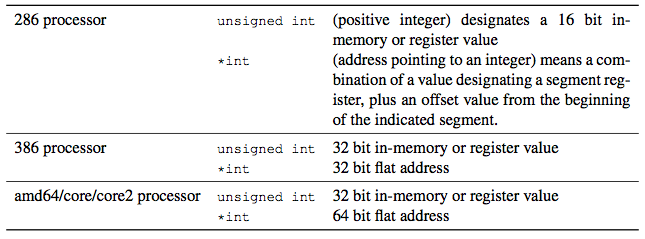

The Rationale for ANSI C states: "To help ensure that no code explosion occurs for what appears to be a very simple operation, many operations are defined to be how the target machine's hardware does it rather than by an abstract rule" (3). Thus, in C, certain symbols often designate physical objects rather than abstract concepts. For example, type specifiers are almost universally linked to a physical unit of memory that is "natural" for a given processor (see table 1). Most of the time, then, lexemes in C designate a specific physical instance of a class, not the class itself.

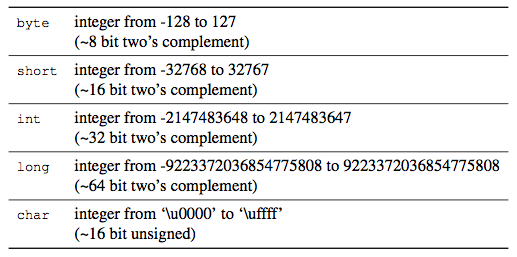
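As a rough way to see which physical unit each type specifier designates on a given machine, the following sketch reports storage widths using only sizeof and CHAR_BIT. The macro STORAGE_BITS and the function print_type_widths are names I have made up for illustration. The numbers printed will differ from one implementation to the next, which is the point.

```c
#include <limits.h>
#include <stdio.h>

/* Storage width, in bits, of a type on the machine this is compiled for. */
#define STORAGE_BITS(type) (sizeof(type) * CHAR_BIT)

/* Print the width the current implementation gives each basic type. */
static void print_type_widths(void)
{
    printf("char:  %lu bits\n", (unsigned long)STORAGE_BITS(char));
    printf("short: %lu bits\n", (unsigned long)STORAGE_BITS(short));
    printf("int:   %lu bits\n", (unsigned long)STORAGE_BITS(int));
    printf("long:  %lu bits\n", (unsigned long)STORAGE_BITS(long));
    printf("float: %lu bits\n", (unsigned long)STORAGE_BITS(float));
}
```

The standard promises only minimums (an int must be able to hold at least 16 bits' worth of values, a long at least 32); everything beyond that is left to the machine.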

For contrast, I'll look at how Java deals with type specifiers (table 2). In Java, int designates a clear, mathematical object, not a physical object. As the table indicates, there are physical objects present in many machines that roughly correspond to the semantics of their mathematical counterparts, but this is not true of all machines. Whereas C type specifiers attempt to make the abstraction correspond with the physical object it indicates, in Java it is the other way around: the physical objects must somehow conform with abstract, defined semantics, often requiring disproportionate amounts of machine code. This characterization can be extended beyond our simple example of type specifiers to all language constructs. Whereas in C the machine is the real object the programmer intends, in Java the machine is an accidental appendage to the semantics of the language.

Using C on any given machine and compiler, one acquires an intimacy with the machine which is almost completely absent in most high-level languages. So much so, that the need to manage machine details has often been a source of criticism of C; certainly this is the major reason Java is defined as it is. But C is still very much a high-level language, and most programs written in C, though the machine shines through, will also compile and make sense on a different machine. When Oracle decided to port their eponymous database (written in C) to Linux in 1998, a reporter asked an engineer what technical challenges they had to surmount. The engineer replied, "we typed 'make' " (Raymond Ch. 17). This level of portability is mostly possible, however, because there is a similarity between the machines themselves. That is, in C, as in most living languages, a single lexeme is an adequate description of more than one object because the objects themselves are similar. Where this similarity ceases, portability ceases.

ANSI C is ambiguous, and it is because of this ambiguity that it can be specific where a specific machine is indicated. Ambiguity, then, is responsible for the references in C that reach out to the machine; ambiguity is a type of abstraction by which semantic elements of C acquire meaning.

The first inklings of this thought came from a comment Andrew Sorenson made in the Critical Code Studies Working Group earlier this year (2010). He demonstrated a livecoding musical performance, which was thoroughly peppered with calls to random. When this was brought up, Sorenson commented: "obviously randomness is, by definition, indeterminate. However, it can also be thought of as an abstraction layer. 'Random' allows you to abstract away detail without requiring a complete model of the underlying process" (Sorenson). When one asks for a random number, one always gets just that: a single, specific number. One does not obtain numberness, or numbers in general. Yet nevertheless, a random number generator in some way could be said to refer to all numbers, because it potentially refers to any number. The C language often works in a similar way. Its indeterminacy is precisely the indeterminacy of the machine it runs on: the character of that machine is not yet determined. By abstracting through ambiguity, C's object is not calculation, algorithms, or even computers, but always a single, specific machine.

Of course, there is a 219-page standard for C, and not all of it refers the reader to undefined behavior. Most of the C standard works the way standards for other programming languages do: by defining behavior.

As Derrida would tell us, definitions consist of other words, which are themselves elsewhere defined, and so on; the real is never reached by definition. The formalist view of the foundations of mathematics is similar: axiomatic systems are neither true nor false, but can only be valid based on internal consistency. There is never one such axiomatic system which is privileged, but rather many possible systems, some of which are useful, some which are not (though why certain sets of axioms are useful is an open question).

Neither the formalist view nor the Derridean view is universally accepted, of course; there are many other ways of looking at language. What we learn contrasting C with other languages, however, is that the question of how language and abstraction function is not simply a theoretical question to be answered after the fact, but a specific, practical question; we shouldn't ask "how does language work?" but "how does this piece of language work?"

In C we see two types of abstraction, abstraction by ambiguity, and abstraction by definition / replacement (here I'm thinking of the Derridean "supplement"). Abstraction by ambiguity designates specific objects. Its universality, then, depends on commonalities of the objects, not features of the language. Abstraction by definition / replacement is supposedly more clear, and more universal, yet it never reaches the physical. Tentatively, we might say that abstraction by ambiguity tends to appear with living languages—languages that grow through practical use within an environment—whereas abstraction by definition / replacement tends to appear with codic languages—languages that emerge fully formed, languages used in language games, languages where a formal specification makes sense. As C stands somewhere between a living and a codic language, it participates both in abstraction by ambiguity and in abstraction by definition / replacement.

One thing that is important to note here, considering the task of critical code studies, is that when it comes down to it, it is unlikely that any language can be described as wholly codic or wholly living; these are modes in which a given language participates. We should, then, carefully consider whether what we are studying is a simple object—code—or a mode in which all language participates—the codic.

The idea of abstraction by ambiguity has interesting intersections with one of the standard problems of philosophy of language: how is it possible for words to designate objects in the world, when all they ever seem to do is refer to classes of objects? In Hegel's Phenomenology of Spirit, this problem is what drives Consciousness away from sense-certainty into philosophy and history, what drives Consciousness towards idealism. At the very end of the section on Consciousness, Hegel writes:

They [those who argue for sense-certainty] speak of the existence of external objects, which can be more precisely defined as actual, absolutely singular, wholly personal, individual things, each of them absolutely unlike anything else; this existence, they say, has absolute certainty and truth. They mean "this" bit of paper on which I am writing—or rather have written—"this"; but what they mean is not what they say. If they actually wanted to say it, then this is impossible, because the sensuous This that is meant cannot be reached by language, which belongs to consciousness, i.e. to that which is inherently universal. In the actual attempt to say it, it would therefore crumble away; those who started to describe it would not be able to complete the description, but would be compelled to leave it to others, who would themselves finally have to admit to speaking about something which is not. They certainly mean, then, this bit of paper here which is quite different from the bit mentioned above; but they say "actual things," "external or sensuous objects," "absolutely singular entities" and so on; i.e. they say of them only what is universal. Consequently, what is called the unutterable is nothing else than the untrue, the irrational, what is merely meant. (s.110 / p.66)

This passage pivots on the idea of the universality of language. Because language (and the stuff of consciousness) is universal, Hegel argues, one is unable to bring specific objects in this world to universality (hence to consciousness), except insofar as they already participate in it. Knowledge depends only on the universal, and the specific is that which is false, that which contains no knowledge.

Having gone over our two types of abstraction, the question we might ask here is: what kind of universality does language have? If language can be universal in the same way that C is portable, or in the same way that a random number generator can refer to a number, then is universality actually opposed to specificity at all? Perhaps, rather than by Hegelian, codic universality, consciousness and language are better characterized by portability: they are ambiguous yet take on specific meaning in specific situations. In that case, " 'this' bit of paper on which I am writing" would be undefined except in context, and in context it would always acquire a specific meaning of a specific object.

This is a rather cursory attempt at dealing with an old and complicated problem. However, the broader point here is that for a practitioner of critical code studies, being critical of code is not enough; code is an object that must also critique us and our theories.

Returning to practical matters, we are left with a question that has acquired a certain centrality since I left it unanswered when exploring the history of ANSI C: if so much of the C language was defined through use, and if the C standard left so much of the C language undefined, why standardize at all? What interests needed this standard, and why? I will examine three major reasons.

The first has already been mentioned: the de facto standard K&R C no longer described C as it was being used. The language needed to be properly documented. Secondly, several inadequacies of C (especially in the C preprocessing phase) were addressed in different ways by different compiler writers. Some were left unaddressed until ANSI Standard C. There was a need for new language features. The third reason was, in the words of Ritchie, "[. . . ]the incipient use of C in projects subject to commercial and government contract meant that the imprimatur of an official standard was important" ("Development of C" 10).

There are two main groups here: engineers on the one hand, and commercial, military, and business institutions on the other.6 The first two needs are the needs of the engineers: they needed documentation and a somewhat consistent feature-set. More or less, the engineers would probably have been satisfied with a new version of K&R C. Commercial, business, and military institutions, however, needed a strict standard.

For those with intimate knowledge of the physical domain in which their language participates, ambiguity is easily resolved into a map of the world. The widespread use of C in a university setting shows that its use may even help to bring the initiated into contact with that world and teach them how it functions. The engineers know their machines—and when they don't, their programs are inefficient and buggy. No language specification is going to free them from the need for this knowledge. Engineers, then, can live with some amount of ambiguity.

In contrast, business, government, and military needs do not correspond with the need to produce an accurate description of the world as it exists. They may also need such a description, but what they require is a way to ensure the regularity, the predictability of the world. They need to enforce regularity. Codifying language is one way to do that. I'm especially thinking of Nietzsche's second essay in the Genealogy of Morality here: promises require regularity. Debt and credit, contract law, as well as military command, all require a world that is regularized by language. The codic is the most modern way to produce that world.

The Military Standard on Specification Practices (MIL-STD-490A) has this to say about the language of specifications:

The paramount consideration in a specification is technical essence, and this should be presented in language free of vague and ambiguous terms and using the simplest words and phrases that will convey the intended meaning. Inclusion of essential information shall be complete, whether by direct statements or reference to other documents. (Department of Defense 13-14)

But the C standard is neither complete nor unambiguous. The Rationale is explicitly "deliberately ambiguous" in at least one case: references to other sections. Unless otherwise specified, these references refer to either the standard or the Rationale. Though my interpretations here are my own, I don't think that the core members of X3J11 would find them especially foreign.

By producing a standard that regularizes irregularity, that defines things as undefined, the ANSI C committee subverted the whole idea of a standard, especially with regard to "completeness." While they produced a theoretical subset of the language that fits the needs of military and business, most engineers don't stick to it, but instead pay attention to what works, to what they know about actual C compilers, actual machines, and how they work. Ambiguity thrives.

1. LISP, developed by McCarthy in 1960 (McCarthy), is a prime example of adherence to first principles in language design, as are Smalltalk (Kay) and Prolog (Colmerauer). These days, perhaps Ruby can also be placed in this camp.

2. Ada is the paradigmatic example (Whitaker). Also of note are Cobol (Sammet) and PL/I (Radin). As for present-day languages, Perl 6 can perhaps be placed here.

3. ANSI (American National Standards Institute) doesn't create standards, but ratifies standards created by other organizations that follow an approved process of community review.

4. For an operation to be "atomic," there must be no possibility that the computer at any point can be interrupted while in an intermediate state. From the point of view of any thread of execution, the operation has either happened completely or it hasn't happened at all.

5. Making figure 1 a "conforming program," but not a "strictly conforming program" (X3J11 6-8).

6. In addition, there was a third group with needs distinct from both of these: the scientific community. However, addressing the way this community's needs corresponded with the standardization process would mean attempting to relate the need for producing scientific truth with the realities of ambiguity, something I am in no way prepared to address here.

Burstall, Rod. "Christopher Strachey—Understanding Programming Languages." Higher-Order and Symbolic Computation 13 (2000): 51-55. Web.

Chun, Wendy. Programmed Visions: Software and Memory. Cambridge, MA: The MIT Press, forthcoming 2010. Print.

Colmerauer, Alain and Philippe Roussel. "The Birth of Prolog." In History of Programming Languages-II. Ed. Thomas J. Bergin, Jr. and Richard G. Gibson, Jr. New York: Association for Computing Machinery, 1993. Web.

Department of Defense. Military Standard: Specification Practices. MIL-STD-490A. Washington, DC, 1985. Web.

Fuller, Matthew, ed. Software Studies: A Lexicon. Cambridge, MA: The MIT Press, 2008. Print.

Hegel, G.W.F. Phenomenology of Spirit. Trans. A.V. Miller. Oxford: Oxford University Press, 1977. Print.

Johnson, Steve C. "A Portable Compiler: Theory and Practice." In Proceedings of the 5th ACM SIGACT-SIGPLAN Symposium on Principles of Programming Languages. New York: Association for Computing Machinery, 1978. Web.

Kay, Alan. "The Early History of SmallTalk." In History of Programming Languages-II. Ed. Thomas J. Bergin, Jr. and Richard G. Gibson, Jr. New York: Association for Computing Machinery, 1993. Web.

Kittler, Friedrich. "There is no Software." CTHEORY. 1995. Web. <http://www.ctheory.net/articles.aspx?id=74>.

Knuth, Donald. "Literate Programming." The Computer Journal 27.2 (1984): 97-111. Web.

Landow, George P., Ed. Hypertext: The Convergence of Contemporary Critical Theory and Technology. Baltimore: Johns Hopkins University Press, 1992. Print.

Marino, Mark. "Critical Code Studies." Electronic Book Review. 2006. Web. <http://www.electronicbookreview.com/thread/electropoetics/codology>.

McCarthy, John. "Recursive Functions of Symbolic Expressions and their Computation by Machine, Part I." Communications of the ACM 3.4 (April 1960): 184-195. Web.

Radin, George. "The Early History and Characteristics of PL/I." In History of Programming Languages. Ed. Richard L. Wexelblat. New York: Association for Computing Machinery, 1978. Web.

Raymond, Eric S. The Art of Unix Programming. Boston, MA: Pearson Education, 2004. Web. <http://catb.org/esr/writings/taoup/html/>.

Ritchie, Dennis. "The Development of the C Language." In History of Programming Languages-II. Ed. Thomas J. Bergin, Jr. and Richard G. Gibson, Jr. New York: Association for Computing Machinery, 1993. Web. <http://cm.bell-labs.com/cm/cs/who/dmr/chist.pdf>.

— "The Evolution of the UNIX Time Sharing System." In Language design and programming methodology : proceedings of a symposium held at Sydney, Australia, 10-11 September 1979. Berlin: Springer-Verlag, 1980. Web. <http://cm.bell-labs.com/cm/cs/who/dmr/hist.pdf>.

Sammet, Jean E. "The Early History of COBOL." In History of Programming Languages. Ed. Richard L. Wexelblat. New York: Association for Computing Machinery, 1978. Web.

Sorenson, Andrew. "Re: Week 5." On critcode.ning.com. March 3, 2010. Web.

Whitaker, William A. "Ada—the Project: The DoD High Order Language Working Group." In History of Programming Languages-II. Ed. Thomas J. Bergin, Jr. and Richard G. Gibson, Jr. New York: Association for Computing Machinery, 1993. Web.

Winograd, Terry and Fernando Flores. Understanding Computers and Cognition: A New Foundation for Design. Norwood, NJ: Ablex Corporation, 1987. Print.

Woods, John F. "Re: Why is this legal?" In comp.std.c 25 February 1992. Usenet.